LazySlide lets researchers search tissue images the way they search genomes

Genomics has its pipelines. Single-cell biology has its atlases. Digital pathology, for all its promise, has mostly had folders full of enormous image files that do not talk to anything else. A new open-source tool called LazySlide is designed to change that by treating tissue images not as static pictures but as searchable, queryable datasets on par with gene expression matrices.

Billions of pixels, almost no interoperability

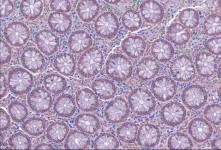

A single whole-slide image of a tissue biopsy can exceed a gigapixel. You can zoom from the full architecture of an organ down to individual cells. The information density is staggering. But unlike a FASTQ file or an expression matrix, there has been no standard way to chop up a whole-slide image, extract quantitative features, and feed them into the same computational ecosystem that drives modern genomics.

The reasons are partly technical and partly cultural. Pathology images sit in proprietary formats. The analysis tools are often incompatible with one another. Connecting what a pathologist sees under the microscope to what a sequencing run reveals about the same tissue requires custom code, manual alignment, and patience. The result is that a vast archive of digitized tissue samples, thousands of them in most academic hospitals, remains largely untapped by the researchers who study gene expression, protein function, and disease mechanisms.

What LazySlide actually does

The tool, developed by a team led by CeMM Principal Investigator Andre Rendeiro and published March 20 in Nature Methods, attacks the problem from three directions at once.

First, it breaks whole-slide images into smaller regions and runs them through AI foundation models - large neural networks pre-trained on massive image datasets. These models extract feature vectors from each patch of tissue, capturing patterns in cell shape, tissue architecture, and spatial organization without requiring anyone to annotate training examples by hand.
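The tiling-and-embedding step can be sketched in a few lines. This is a minimal illustration, not LazySlide's API: the function names are hypothetical, the "slide" is a synthetic random array, and `toy_encoder` is a stand-in for a pre-trained foundation model that returns simple per-channel statistics instead of learned features.

```python
import numpy as np

def tile_coordinates(height, width, tile_size, stride=None):
    """Return (row, col) top-left corners of tiles that fit fully inside the image."""
    stride = stride or tile_size
    rows = range(0, height - tile_size + 1, stride)
    cols = range(0, width - tile_size + 1, stride)
    return [(r, c) for r in rows for c in cols]

def toy_encoder(tile):
    """Placeholder for a foundation model: per-channel mean and standard deviation."""
    pixels = tile.reshape(-1, tile.shape[-1]).astype(np.float64)
    return np.concatenate([pixels.mean(axis=0), pixels.std(axis=0)])

def embed_tiles(image, coords, tile_size, encoder):
    """Apply the encoder to every tile and stack into an (n_tiles, n_features) matrix."""
    return np.stack(
        [encoder(image[r:r + tile_size, c:c + tile_size]) for r, c in coords]
    )

# Synthetic stand-in for a (much larger) whole-slide image: height x width x RGB.
rng = np.random.default_rng(0)
slide = rng.integers(0, 256, size=(1024, 1536, 3), dtype=np.uint8)

coords = tile_coordinates(slide.shape[0], slide.shape[1], tile_size=256)
features = embed_tiles(slide, coords, 256, toy_encoder)
print(features.shape)  # (24, 6): 4 x 6 tiles, 6 features each
```

The result is a tiles-by-features matrix, the same shape of object as a cells-by-genes expression matrix, which is what makes the downstream interoperability possible.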

Second, it makes those features interoperable with standard computational biology tools. Researchers who already use frameworks like AnnData or Scanpy for single-cell work can slot LazySlide outputs directly into their existing pipelines. That is a practical detail, but a significant one: it means a lab does not have to learn an entirely new software stack to start working with pathology images.

Third, and this is the feature likely to attract the most attention, it connects images to natural language. Using vision-language AI models, LazySlide lets a researcher type a word like "calcification" and see the regions of a tissue slide where visual evidence of that process is strongest, complete with quantitative scores.

Typing "calcification" into a microscope

The natural-language querying deserves a closer look, because it represents a genuinely different way of interacting with pathology data. In a conventional workflow, identifying regions of calcification in a tissue sample requires either a trained pathologist reviewing the slide or a machine-learning model specifically trained on labeled examples of calcification. Both are expensive. Both scale poorly.

LazySlide's approach is what the AI field calls "zero-shot" analysis. The vision-language model has learned general associations between visual patterns and text concepts during its pre-training phase. When a researcher queries for calcification, the system scores each image region based on how strongly its visual features align with the text concept. No task-specific training required.
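A common way to implement this kind of scoring, sketched here under stated assumptions, is to compare each tile's embedding with each prompt's embedding by cosine similarity and then apply a softmax across prompts. The embeddings below are synthetic, the second prompt and the temperature value are illustrative choices, and nothing here is taken from LazySlide's own code.

```python
import numpy as np

def l2_normalize(x):
    """Scale each row to unit length so a dot product equals cosine similarity."""
    return x / np.linalg.norm(x, axis=-1, keepdims=True)

def zero_shot_scores(tile_embeddings, text_embeddings, temperature=0.07):
    """Return an (n_tiles, n_prompts) matrix of softmax-normalized similarity scores."""
    sims = l2_normalize(tile_embeddings) @ l2_normalize(text_embeddings).T
    logits = sims / temperature
    logits = logits - logits.max(axis=1, keepdims=True)  # numerical stability
    probs = np.exp(logits)
    return probs / probs.sum(axis=1, keepdims=True)

prompts = ["calcification", "normal artery wall"]  # second prompt is an assumption

# Synthetic embeddings: a real system would get these from the image and
# text encoders of a vision-language model.
rng = np.random.default_rng(0)
text_emb = rng.normal(size=(2, 64))
tile_emb = np.vstack([
    text_emb[0] + 0.1 * rng.normal(size=(3, 64)),  # tiles built near prompt 0
    text_emb[1] + 0.1 * rng.normal(size=(3, 64)),  # tiles built near prompt 1
])

scores = zero_shot_scores(tile_emb, text_emb)
best = scores.argmax(axis=1)
for i, j in enumerate(best):
    print(f"tile {i}: {prompts[j]} (score {scores[i, j]:.2f})")
```

No classifier is trained at any point. The only inputs are the two sets of embeddings, which is why the quality of the result rests on what the pre-trained model already knows.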

The team demonstrated this capability in several ways. They showed that LazySlide could identify the organ of origin of tissue samples and distinguish healthy from diseased tissue without being specifically trained for either task. In a more detailed example, they analyzed artery tissue samples with and without calcification. The system separated the two conditions based on image features alone, and when image data and RNA sequencing data were analyzed together, it also revealed the biological pathways involved, including inflammatory signaling.

"Histology contains an enormous amount of biological information, but it is often difficult to access computationally," said Yimin Zheng, first author of the study. The goal, Zheng explained, was to let researchers explore tissue images in a systematic, quantitative way and connect visual observations to molecular processes.

Bridging the image-molecule divide

The integration with molecular data is where LazySlide moves from a convenient image viewer to something with broader research implications. The artery calcification example illustrates the point: image features and gene expression data, analyzed separately, each tell part of the story. Analyzed together through LazySlide's framework, they revealed inflammatory signaling pathways that neither data type surfaced on its own.
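One simple way to link the two data types, shown here purely as an illustration and not as the analysis used in the paper, is to summarize each sample's images as a single score and correlate that score with each gene's expression across samples. All values below are synthetic, the gene names are hypothetical, and an association is deliberately planted in the first gene so the ranking has something to find.

```python
import numpy as np

def pearson_per_gene(image_score, expression):
    """Pearson correlation between one per-sample score and every gene column."""
    x = image_score - image_score.mean()
    y = expression - expression.mean(axis=0)
    denom = np.sqrt((x ** 2).sum()) * np.sqrt((y ** 2).sum(axis=0))
    return (x @ y) / denom

rng = np.random.default_rng(0)
n_samples = 40
genes = ["GENE_A", "GENE_B", "GENE_C"]  # hypothetical names

# Synthetic per-sample image score, e.g. a mean zero-shot score per slide.
image_score = rng.uniform(size=n_samples)

# Synthetic expression matrix (samples x genes) with a planted link for GENE_A.
expression = rng.normal(size=(n_samples, len(genes)))
expression[:, 0] += 5.0 * image_score

r = pearson_per_gene(image_score, expression)
ranked = [genes[i] for i in np.argsort(-np.abs(r))]
print(ranked[0])  # the gene most strongly associated with the image score
```

Because image features and expression values share the same samples-by-variables layout, this kind of cross-modal comparison becomes a matter of matrix operations rather than custom alignment code.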

This kind of multi-modal analysis has been a goal of computational pathology for years, but the practical barriers have been high. Different data types live in different software ecosystems, use different file formats, and require different expertise to handle. By building on the same data structures that genomics researchers already use, LazySlide lowers the activation energy for combining image and molecular data considerably.

Rendeiro framed the ambition plainly: "By treating tissue images as rich datasets rather than static pictures, we can gain new insights into how diseases shape human biology."

What the tool does not do

Several caveats are worth noting. LazySlide depends on pre-trained foundation models, and those models carry their own biases and blind spots. The quality of zero-shot queries depends entirely on what the underlying vision-language model learned during training: if a tissue pattern was poorly represented in the training data, the system may miss it or mischaracterize it. The paper does not claim clinical diagnostic accuracy, and the tool is positioned as a research instrument, not a replacement for pathologist review.

The interoperability advantage also has limits. LazySlide integrates well with Python-based computational biology tools, but labs using other software ecosystems would need adapters. And while the tool handles the feature extraction and analysis steps, it does not solve the upstream problem of slide scanning and image quality: garbage in, garbage out still applies.

The validation examples in the paper, while compelling, involve relatively well-characterized tissue types and disease states. How well zero-shot querying performs on rare conditions, subtle morphological changes, or tissue types underrepresented in foundation model training sets remains an open question.

A missing layer in computational biology

The broader significance of LazySlide may be less about any single feature and more about what it represents: the arrival of tissue imaging as a first-class data type in computational biology. Genomics went through a similar transition when standardized file formats and shared analysis pipelines turned raw sequence data from a specialist bottleneck into a commodity that any bioinformatician could work with. Digital pathology has been waiting for its version of that transition.

Whether LazySlide is the tool that finally triggers it depends on adoption, community contributions, and how quickly the underlying AI models improve. But the architecture is sound, the code is open-source, and the integration points with existing tools are real. For labs sitting on collections of whole-slide images that have never been computationally analyzed, the barrier to entry just dropped.